Friday 17 April 2026, 10:03 AM

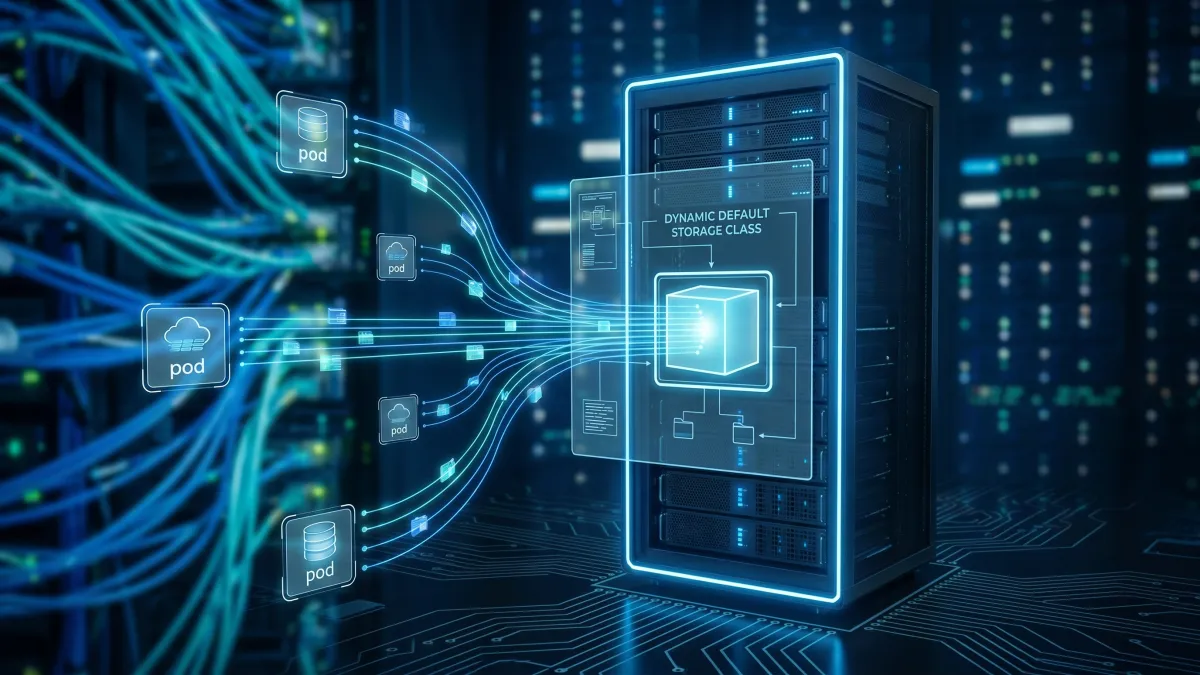

Automating GKE volume scheduling with the new Dynamic Default Storage Class

Discover how GKE's new Dynamic Default Storage Class automates volume scheduling, seamlessly switching between Hyperdisk and Persistent Disk based on hardware.

If you’ve spent any time managing stateful workloads in Kubernetes over the last decade, you know the exact flavor of headache that comes with storage provisioning. Historically, running mixed-generation clusters meant dealing with a tangle of manual scheduling rules. We’d spend hours writing complex taints, tolerations, and node affinity configurations just to ensure a Pod requesting a next-generation disk didn't accidentally get scheduled on an older, incompatible VM. It was a tedious, high-friction process that often left platform engineers burnt out and developers waiting.

That’s why I’ve been closely following a recent update to Google Kubernetes Engine (GKE). The introduction of the Dynamic Default Storage Class fundamentally changes how we handle volume scheduling, and more importantly, it makes the entire developer experience vastly more intuitive.

Making storage scheduling intuitive

To really understand the impact here, we have to look at the user experience of the platform engineers managing these clusters. Upgrading multi-year infrastructure typically requires refactoring application configurations and navigating a minefield of potential downtime.

Starting with GKE version 1.35.0-gke.2232000, administrators can enable automatic disk selection by setting the type parameter to dynamic in their StorageClass manifests. This single setting triggers automated, hardware-aware provisioning logic. Instead of forcing teams to rewrite their GitOps pipelines to accommodate new hardware, the platform abstracts that complexity away and picks the disk type that is compatible with the underlying node. For teams trying to scale quickly, removing this operational toil is a massive win for productivity.
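As a rough illustration of that setting, the Python sketch below builds a StorageClass manifest with type set to dynamic and prints it as JSON, which kubectl accepts alongside YAML. The class name is hypothetical, and the pd.csi.storage.gke.io provisioner and WaitForFirstConsumer binding mode are assumptions about a typical GKE setup rather than details from the release notes. A real dynamic class may need further parameters, so check the GKE documentation before applying anything like this.

```python
import json


def build_dynamic_storage_class(name: str) -> dict:
    """Return a StorageClass manifest that asks GKE to pick the disk type.

    Only `type: dynamic` comes from the article; every other field is an
    assumption made for this sketch.
    """
    return {
        "apiVersion": "storage.k8s.io/v1",
        "kind": "StorageClass",
        "metadata": {"name": name},  # hypothetical name
        # Assumed: the GKE Persistent Disk CSI driver provisioner.
        "provisioner": "pd.csi.storage.gke.io",
        # Assumed: delay binding until a Pod is scheduled, so the node's
        # hardware is known before a disk is created.
        "volumeBindingMode": "WaitForFirstConsumer",
        "parameters": {"type": "dynamic"},
    }


if __name__ == "__main__":
    manifest = build_dynamic_storage_class("dynamic-default")
    print(json.dumps(manifest, indent=2))
```

Piping the printed JSON into kubectl apply -f - would be one way to try it on a test cluster.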

Bridging the gap between legacy and next-gen disks

This dynamic selection acts as a bridge between two very different storage architectures. On one side, we have legacy Persistent Disks (PD), where performance is shared across attached volumes and scales linearly with gigabyte capacity. On the other, we have Google's newer Hyperdisk technology.

Hyperdisk is a game-changer because it decouples capacity from performance. It allows us to tune IOPS and throughput independently of the actual storage size, with Hyperdisk Balanced reaching up to 2.4 GBps of throughput and 160,000 IOPS.
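To make the contrast concrete, here is a toy model of the two approaches. The per-GiB rate is an illustrative placeholder, not an official Persistent Disk figure, and the model ignores the additional constraints real disks have. Only the 160,000 IOPS ceiling for Hyperdisk Balanced comes from the text above.

```python
# Illustrative placeholder, not an official Persistent Disk rate.
ASSUMED_PD_IOPS_PER_GIB = 6
# Hyperdisk Balanced ceiling quoted in the article.
HYPERDISK_BALANCED_MAX_IOPS = 160_000


def pd_iops(size_gib: int) -> int:
    """Persistent Disk model: IOPS grow linearly with provisioned capacity."""
    return size_gib * ASSUMED_PD_IOPS_PER_GIB


def hyperdisk_iops(size_gib: int, requested_iops: int) -> int:
    """Hyperdisk model: IOPS are requested directly, capped at the ceiling.

    `size_gib` is accepted only to show that it does not affect the result
    in this simplified model.
    """
    return min(requested_iops, HYPERDISK_BALANCED_MAX_IOPS)


if __name__ == "__main__":
    for size in (100, 1000):
        print(f"{size} GiB: PD model {pd_iops(size)} IOPS, "
              f"Hyperdisk model {hyperdisk_iops(size, 20_000)} IOPS")
```

In the Persistent Disk model the only way to get more IOPS is to buy more capacity, while in the Hyperdisk model the requested figure stays the same whatever the disk size.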

But mixing these in a cluster used to be risky. Thankfully, GKE version 1.34.1-gke.2541000 introduced the use-allowed-disk-topology parameter. When set to true, it integrates storage provisioning directly with the Kubernetes scheduler, so Pods whose volumes come from the dynamic StorageClass are not scheduled onto incompatible node pools running older versions. From a usability standpoint, this is exactly what we want: a system that prevents silent mounting failures and degraded application states before they happen.
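Since both features are gated on specific GKE versions, a small pre-flight check can be handy before rolling out a new StorageClass. The sketch below parses version strings of the form shown in this article and compares them. It assumes versions order by major, minor, and patch first, then by the numeric -gke suffix; the function names are hypothetical.

```python
import re

# Minimum versions quoted in the article.
MIN_DYNAMIC_TYPE = "1.35.0-gke.2232000"
MIN_ALLOWED_DISK_TOPOLOGY = "1.34.1-gke.2541000"

_VERSION_RE = re.compile(r"^v?(\d+)\.(\d+)\.(\d+)-gke\.(\d+)$")


def parse_gke_version(version: str) -> tuple:
    """Split '1.35.0-gke.2232000' into (1, 35, 0, 2232000)."""
    match = _VERSION_RE.match(version.strip())
    if match is None:
        raise ValueError(f"unrecognized GKE version: {version!r}")
    return tuple(int(part) for part in match.groups())


def supports(cluster_version: str, minimum: str) -> bool:
    """True if cluster_version is at or above the given minimum."""
    return parse_gke_version(cluster_version) >= parse_gke_version(minimum)


if __name__ == "__main__":
    cluster = "1.34.1-gke.2541000"  # example value
    print("use-allowed-disk-topology:", supports(cluster, MIN_ALLOWED_DISK_TOPOLOGY))
    print("type: dynamic:", supports(cluster, MIN_DYNAMIC_TYPE))
```

A cluster on the example version would pass the topology check but not yet qualify for the dynamic type, which is a useful reminder that the two capabilities arrived in different releases.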

The safety net of automated fallbacks

I always look for how a system behaves when things don't go according to plan. The Dynamic Default Storage Class features a native, automated fallback architecture that acts as a brilliant safety net. If the scheduler tries to place a workload on an older VM generation that lacks Hyperdisk support, the Container Storage Interface (CSI) driver doesn't just crash. Instead, it automatically provisions the legacy disk variant specified in the pd-type fallback parameter (for example, pd-balanced).
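The fallback decision can be pictured with a few lines of Python. This is a conceptual model only, not the CSI driver's actual code: the class, the function, and the hyperdisk-balanced default are hypothetical, and node compatibility is reduced to a single boolean.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class DynamicClassParams:
    """Simplified stand-in for the parameters of a dynamic StorageClass."""
    hyperdisk_type: str = "hyperdisk-balanced"  # hypothetical preferred type
    pd_type: str = "pd-balanced"  # fallback example named in the article


def select_disk_type(node_supports_hyperdisk: bool,
                     params: DynamicClassParams = DynamicClassParams()) -> str:
    """Pick Hyperdisk when the node can attach it, otherwise fall back."""
    if node_supports_hyperdisk:
        return params.hyperdisk_type
    return params.pd_type


if __name__ == "__main__":
    for supported in (True, False):
        print(f"node supports Hyperdisk={supported}: "
              f"{select_disk_type(supported)}")
```

The point of the model is that the workload always gets a volume; what changes is which kind, and that is exactly where the performance caveats come from.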

This ensures uninterrupted deployment, which is critical for high availability. However, we do need to be cautious about the performance asymmetry this creates. Because workloads might automatically fall back to legacy Persistent Disks on older nodes, an application that needs dedicated, tunable IOPS could suddenly experience noisy-neighbor latency. Additionally, aggregate VM-level limits still apply, meaning multiple high-performance Hyperdisks on a single node could face proportional throttling. It's a beautifully designed abstraction, but operators still need to keep an eye on their telemetry to ensure applications are getting the resources they expect.
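To get a feel for what proportional throttling could mean in practice, the sketch below scales each disk's provisioned IOPS by the same factor whenever their sum exceeds a per-VM ceiling. The exact behavior on GKE nodes is not specified here, so treat this as one plausible reading; the function name and all numbers are hypothetical.

```python
def throttle_proportionally(provisioned_iops: list, vm_limit: int) -> list:
    """Scale per-disk IOPS down evenly so their sum fits under vm_limit.

    Returns the provisioned values unchanged (as floats) when the total
    is already within the limit.
    """
    total = sum(provisioned_iops)
    if total <= vm_limit:
        return [float(iops) for iops in provisioned_iops]
    # Each disk keeps the same share of the total that it was provisioned.
    return [iops * vm_limit / total for iops in provisioned_iops]


if __name__ == "__main__":
    disks = [100_000, 100_000]  # hypothetical provisioned IOPS per disk
    limit = 160_000             # hypothetical per-VM ceiling
    print(throttle_proportionally(disks, limit))
```

Comparing a calculation like this against observed per-volume metrics is one way to spot whether an application is being held back by the node rather than by its own disk settings.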

Doing more with less using storage pools

What makes this automated scheduling even more practical is how it integrates with Hyperdisk Storage Pools, a feature that rolled out back in GKE 1.29.2.

By combining dynamic hardware selection with storage pools, teams can purchase capacity and performance upfront and share it across multiple disks via thin provisioning. Google Cloud estimates this approach can reduce storage-related Total Cost of Ownership (TCO) by 30% to 50%. Making high-performance storage more financially accessible is incredibly important, especially for leaner startups trying to optimize their runway without sacrificing user experience.

Ultimately, this update is a perfect example of what happens when cloud providers focus on the human element of infrastructure. By automating volume scheduling and providing seamless fallbacks, GKE is letting us spend less time wrestling with hardware compatibility and more time actually building.

References

- https://docs.cloud.google.com/kubernetes-engine/docs/concepts/hyperdisk

- https://cloud.google.com/blog/topics/inside-google-cloud/whats-new-google-cloud

- https://docs.cloud.google.com/kubernetes-engine/docs/how-to/persistent-volumes/hyperdisk

- https://docs.cloud.google.com/kubernetes-engine/docs/troubleshooting/storage

- https://cloud.google.com/blog/products/containers-kubernetes/hyperdisk-balanced-for-gke-now-available

- https://dev.to/latchudevops/part-27-google-cloud-hyperdisk-and-storage-pools-a-hands-on-guide-3ig8

- https://cloud.google.com/blog/products/containers-kubernetes/gke-features-to-optimize-resource-allocation

- https://cloud.google.com/blog/products/storage-data-transfer/hyperdisk-storage-pools-optimizes-gke-block-storage/