Sunday 1 March 2026, 07:41 AM

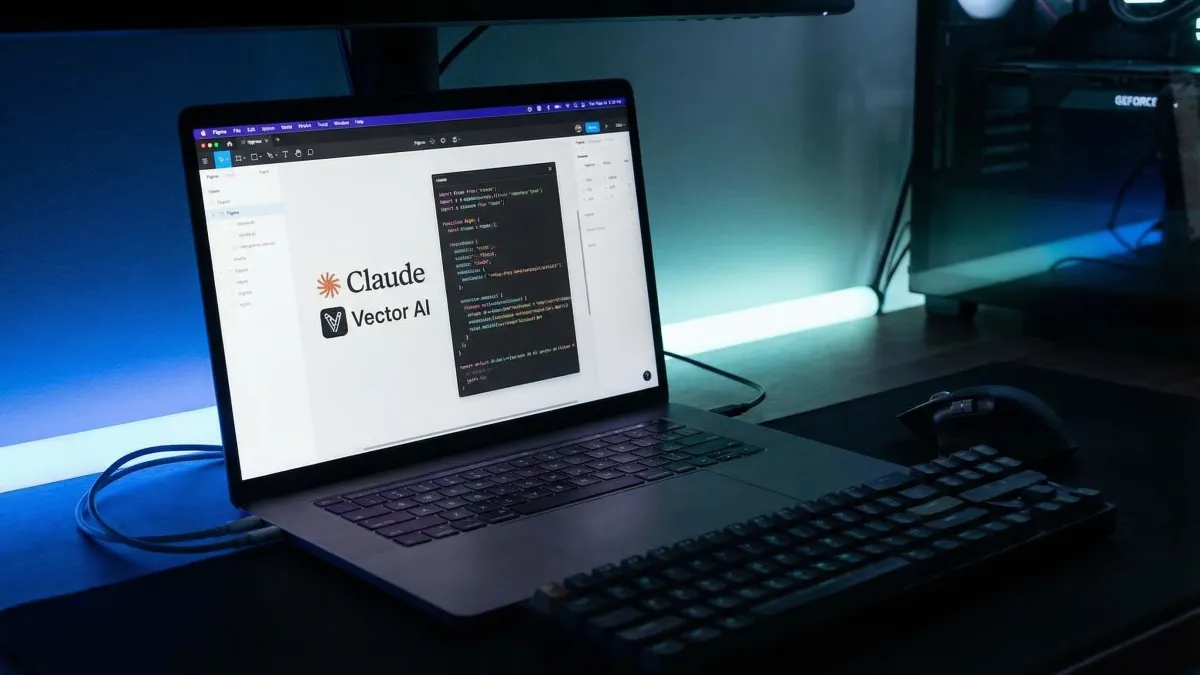

Figma launches the February 2026 update with Claude Code integration and native AI vectorization

Figma's February 2026 release introduces Claude Code integration for design-to-code workflows and native AI tools for image vectorization and background removal.

I’ve spent the last decade in the Bay Area watching tools promise to bridge the gap between design and development. It’s the oldest cliché in our industry: designers dream in pixels, developers think in logic, and somewhere in the middle, the user experience gets compromised by a bad handoff.

For a long time, I was skeptical that AI would be the glue to finally fix this. Too often, "AI features" feel like vaporware bolted onto legacy software just to pump the stock price. But after spending some time with Figma’s February 2026 release, I’m willing to admit that the landscape is actually shifting. This isn't just about generating pretty pictures; it’s about scalability and the fundamental accessibility of our workflows.

Closing the loop with Claude Code

The headline feature here is the bidirectional integration with Claude Code, and for an aspiring founder like me, this is the kind of practical innovation that actually saves weekends.

Figma has introduced an integration via an MCP server that allows us to import live web pages and AI-generated UI states directly into editable Figma frames. That word—editable—is doing a lot of heavy lifting. In the past, importing a build back into design usually meant getting a flat screenshot that was useless for iteration.

Now, we are looking at a workflow where the "source of truth" is fluid. I can take a live build, pull it back into the canvas, and tweak the UX based on real-world constraints without rebuilding the component library from scratch.

They’ve also updated Figma Make with a model picker for Claude 4.6 Sonnet and Opus. From a user experience perspective, having access to these specific models within the design environment reduces context switching. We aren't tab-switching to a chat interface to generate code snippets; we are staying in the flow state. It makes the technical side of design feel less intimidating and more intuitive for pure creatives.

Native vectorization is an accessibility win

While the code integration grabs the headlines, the new "Vectorize" feature is the quiet hero for day-to-day usability. Figma can now natively convert raster images into editable vector paths.

I have lost count of how many times I’ve received a logo or an icon asset as a low-res PNG, forcing me to either trace it manually or jump into a different suite like Illustrator just to make it scalable. That friction is gone.

But let’s look at this through the lens of accessibility. Scalability is a core tenet of accessible design. When we rely on rasters, we risk pixelation when users zoom in or when interfaces scale across massive displays. By democratizing the creation of vectors and making it a one-click native process, Figma is making it easier for designers to ensure their assets are crisp and legible for every user, on every device. It’s a small technical shift with a massive impact on the end-user experience.
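To put a rough number on that pixelation risk, here is a minimal TypeScript sketch of how far a raster has to be stretched to fill a given on-screen size. The `upscaleFactor` helper and the icon sizes are my own illustration, not anything Figma exposes; anything above 1 means the renderer is inventing pixels the source never had, which a vector never needs to do.

```typescript
// Hypothetical helper (not a Figma API): how much a raster must be stretched
// to cover its on-screen size. A result above 1 means upscaling, i.e. blur.
function upscaleFactor(
  sourcePx: number,         // width of the raster file in pixels
  displayCssPx: number,     // width it is laid out at, in CSS pixels
  devicePixelRatio: number  // physical pixels per CSS pixel (2 on many laptops)
): number {
  // Physical pixels the image has to cover on this screen.
  const requiredPx = displayCssPx * devicePixelRatio;
  return requiredPx / sourcePx;
}

// A 64px-wide PNG icon laid out at 128 CSS px on a 2x display.
const atNormalSize = upscaleFactor(64, 128, 2);

// The same icon laid out at twice that size, e.g. on a large-display layout.
const atDoubleSize = upscaleFactor(64, 256, 2);

console.log(atNormalSize, atDoubleSize); // 4 8
```

With those made-up numbers the PNG is already stretched 4x at its normal size and 8x at double size, which is exactly where the jagged edges come from.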

Designing with real data

Finally, I want to touch on the new connectors for Figma Make. We can now pull data directly from ecosystem tools: Amplitude, Dovetail, and zeroheight.

This appeals to the pragmatist in me. We often design for the "happy path"—the ideal user flow where nothing goes wrong. But real users are messy. By pulling in qualitative data from Dovetail or usage metrics from Amplitude directly into the design file, we can design for reality.

If I can see right on my canvas that 40% of users are dropping off at a specific modal, I can iterate on that UX immediately. It grounds the creative process in data, ensuring we aren't just making things look good, but making them work for the people actually using them.
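For anyone who wants to see where a number like that 40% comes from, here is a minimal TypeScript sketch of a step-by-step drop-off calculation. The `FunnelStep` shape, the step names, and the user counts are all hypothetical; this is not Amplitude's export format or anything the Figma Make connector is documented to return.

```typescript
// Hypothetical funnel data shape; real analytics exports will differ.
interface FunnelStep {
  name: string;
  users: number; // users who reached this step
}

interface DropOff {
  from: string;
  to: string;
  rate: number; // fraction of users lost between the two steps (0 to 1)
}

// Compute the share of users lost between each pair of consecutive steps.
function dropOffRates(steps: FunnelStep[]): DropOff[] {
  const rates: DropOff[] = [];
  for (let i = 1; i < steps.length; i++) {
    const prev = steps[i - 1];
    const curr = steps[i];
    // Guard against dividing by zero when nobody reached the previous step.
    const rate = prev.users === 0 ? 0 : (prev.users - curr.users) / prev.users;
    rates.push({ from: prev.name, to: curr.name, rate });
  }
  return rates;
}

// Made-up numbers for illustration only.
const checkoutFunnel: FunnelStep[] = [
  { name: "Cart", users: 1000 },
  { name: "Shipping modal", users: 800 },
  { name: "Payment", users: 480 },
];

for (const d of dropOffRates(checkoutFunnel)) {
  console.log(`${d.from} -> ${d.to}: ${(d.rate * 100).toFixed(0)}% drop-off`);
}
```

In this made-up funnel, 20% of users leave between the cart and the shipping modal, and 40% leave between the modal and payment, so the modal is the step to iterate on first.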

The verdict

Figma’s latest update feels like a maturation. We are moving past the "wow" phase of AI and into the "how does this actually help me build?" phase.

By lowering the barrier between code and design, and by baking scalability tools directly into the interface, they are respecting the user’s time and intelligence. For those of us building the next generation of products in San Francisco and beyond, this is exactly the kind of toolkit we need.